What intelligent automation is and what it is not

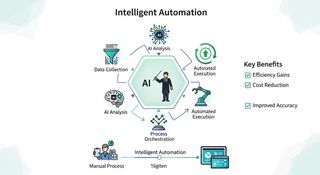

Intelligent automation does not mean adding a layer of AI to any process and expecting magic. In a business context, it means combining automation, data, and interpretation so that certain tasks can be executed with less friction, fewer errors, and more consistency.

In practice, this may mean a system classifying emails, extracting data from a document, generating a first draft, or triggering an internal workflow without a person having to complete every step manually. The key point is this: not everything should be automated, and not every automation effort needs AI. When there is too much variability, too much risk, or unreliable sources, the value is not in automating more, but in deciding more carefully what should be automated and what should not.

That is why, rather than talking about technology in the abstract, it is more useful to talk about operational judgment: where there is repetition, friction, wasted time, or variability worth reducing.

Where it creates real value inside a business

Intelligent automation tends to work best where there is volume, repetition, and a clear decision logic. There is no need to start with a large project. In many cases, value appears first at very specific points in day-to-day work.

This is especially true in administrative and operational processes where patterns repeat over and over again: email triage and follow-up, data entry and validation, repetitive document preparation, information handoffs between tools, or internal reminders and follow-ups. These are tasks that consume time, generate manual errors, and overload teams without creating much differentiating value.

It also creates strong value in internal knowledge and documentation. When teams lose time looking for information, asking the same questions repeatedly, or working from different versions of the same document, an internal assistant grounded in clear sources can remove a great deal of noise. This is often where value appears in environments with procedures, quality systems, internal support, or dispersed documentation.

There is also a third clear area: quality, validation, and draft preparation. When a system helps prepare drafts, checklists, or structured summaries with human review, it is not replacing judgment. It is reducing preparation time and making the first steps more consistent.

When a company identifies two or three points like these, it is no longer simply “exploring AI.” It is starting to build operational capability.

What prerequisites you need before starting

One of the most common mistakes is thinking that the first step is choosing a tool. Usually, it is not. Before technology, you need a bit of order.

The first prerequisite is being able to describe the problem with some precision: where time is being lost, where repetition exists, where errors or rework appear, and which team is experiencing the most friction. If the starting point is only “we want to do something with AI,” it is still too early.

The second is having reasonably clear sources and a reasonably clear process. If information is scattered, duplicated, or lacks a reliable version, any intelligent layer will inherit that chaos. Before automating, you need basic clarity around documents, decision criteria, and control points.

The third is defining limits and validation. Not every process should run unattended. In many cases, the best setup is one of the following: a source-backed response with human validation, draft-first output, partial automation with checkpoints, or a safe refusal when context is missing or risk is too high. This matters especially when sensitive data, quality decisions, or customer impact are involved.
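The limits described in this third prerequisite can be sketched as a small routing rule. This is only an illustration under stated assumptions: the `Request` fields, the `Action` names, and the order of the checks are hypothetical and would need to reflect your own risk criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ANSWER_WITH_SOURCES = "answer_with_sources"
    DRAFT_FOR_REVIEW = "draft_for_review"
    REFUSE = "refuse"


@dataclass
class Request:
    # Hypothetical flags; a real system would derive them from its own checks.
    has_reliable_sources: bool
    involves_sensitive_data: bool
    affects_customer: bool


def decide(request: Request) -> Action:
    """Pick the most cautious action that still fits the request."""
    # Missing context: refuse rather than guess.
    if not request.has_reliable_sources:
        return Action.REFUSE
    # Higher-risk cases produce a draft that a person validates.
    if request.involves_sensitive_data or request.affects_customer:
        return Action.DRAFT_FOR_REVIEW
    # Low-risk, well-sourced cases can be answered directly, citing sources.
    return Action.ANSWER_WITH_SOURCES
```

Checking for missing sources first means the refusal path takes priority over everything else, which matches the idea that a safe "no" is better than an unsupported answer.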

Common mistakes that block return

Many initiatives fail not because the technology is poor, but because the framing is too broad or too vague.

One common mistake is trying to start with everything at once: indexing every tool, connecting every system, or deploying a solution across the whole company from day one. When that happens, risk increases and quality drops. What usually works better is starting with a small, useful, controllable scope.

Another mistake is confusing a demo with a real capability. A polished proof of concept is not always a usable solution. If there are no clear sources, no validation, no minimum governance, and no real day-to-day usage, the initiative remains a demonstration.

It is also common to measure only enthusiasm. If you are not looking at time saved, volume, error reduction, or actual usage, it is easy to believe an initiative is going well when in reality very little has changed. Return usually becomes visible when you define a concrete unit of work and compare before and after.
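As a rough illustration of comparing before and after for one unit of work, the arithmetic can be as simple as the sketch below. The function names and the figures in the usage example are invented for illustration; they are not measurements from any real project.

```python
def hours_saved_per_week(minutes_before: float, minutes_after: float,
                         tasks_per_week: int) -> float:
    """Weekly time saved for one unit of work, in hours."""
    return (minutes_before - minutes_after) * tasks_per_week / 60


def error_rate(errors: int, volume: int) -> float:
    """Share of tasks that needed correction or rework."""
    if volume <= 0:
        raise ValueError("volume must be positive")
    return errors / volume


# Illustrative figures only: a task that took 12 minutes now takes 5,
# and it is handled 150 times per week.
print(hours_saved_per_week(12, 5, 150))  # 17.5

# Error rates before and after, measured over the same weekly volume.
print(error_rate(9, 150), error_rate(3, 150))
```

Tracking actual usage alongside these numbers helps, since time saved per task means little if the task is rarely routed through the new process.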

Finally, many initiatives fall short because of adoption. Even when the technical layer works, value fades if teams do not understand when to use it, when to escalate, or when to say no. Adoption is not an extra; it is part of the outcome.

How to start with judgment and a tightly scoped pilot

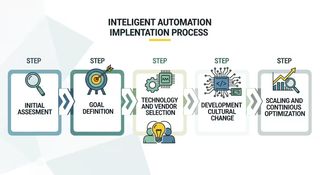

The healthiest way to start is not with a broad promise, but with a small and useful pilot.

The best starting point is usually a very recognizable unit of everyday work: internal documentation queries, preparation of a repetitive draft, data entry between tools, or repetitive administrative and commercial follow-up. The point is not that it looks impressive, but that it is frequent, well-bounded, and important enough to measure afterwards.

Before building anything, it helps to agree on four simple things: how much time this task consumes today, what outcome you want to improve, who validates the output, and in which situations the system should answer, ask clarifying questions, or say no. That agreement prevents many later discussions and makes the pilot easier to evaluate properly.

If you want a more concrete way to structure that decision, this guide on how to choose your first AI pilot without losing months is a useful next step.

At this stage, less is better. It is preferable to work with a few reliable sources, a few real users, one channel, and short feedback loops. Starting this way reduces risk and creates real learning much earlier than a broad rollout.

When it makes sense to scale

Scaling does not simply mean opening the pilot up to more people. It means the pilot has already demonstrated four things: that it solves a real problem, maintains sufficient quality, is understood and used by the people involved, and has a minimum governance and maintenance base behind it.
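One way to keep those four conditions explicit is to record them as a short review and list whichever ones are still unmet. This is a minimal sketch; the `PilotReview` structure and its field names are assumptions made for illustration, not a standard checklist.

```python
from dataclasses import dataclass, fields


@dataclass
class PilotReview:
    # Hypothetical field names mirroring the four conditions above.
    solves_real_problem: bool
    quality_is_sufficient: bool
    is_understood_and_used: bool
    has_governance_and_maintenance: bool


def unmet_criteria(review: PilotReview) -> list[str]:
    """Return the names of the conditions the pilot has not yet met."""
    return [f.name for f in fields(review) if not getattr(review, f.name)]


def ready_to_scale(review: PilotReview) -> bool:
    """Scaling only makes sense when every condition holds."""
    return not unmet_criteria(review)


review = PilotReview(True, True, False, True)
print(ready_to_scale(review))   # False
print(unmet_criteria(review))   # ['is_understood_and_used']
```

Returning the unmet conditions, rather than a single yes or no, points directly at what to adjust before considering a wider rollout.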

When that happens, it makes sense to consider expanding sources, teams, or use cases. If it has not happened yet, the best next move is to refine the pilot rather than scale it.

At that point, intelligent automation stops being an attractive idea and starts becoming a repeatable capability inside the organization.

Conclusion: less broad promise, more operational judgment

Intelligent automation can create a great deal of value, especially when it starts from a more useful question than “where can we put AI?”

The better question is usually this: where do we have enough repetition, friction, or wasted time to justify a useful, measurable, and controlled pilot?

Starting there makes it much easier to get real results, build internal confidence, and decide what is worth scaling afterward.

If you want to see how this can be applied in a real context, you can explore how we work in our services, review our solution families, or contact us directly to assess whether there is a sensible first pilot for your case.

References and context. This article has been reframed using frameworks and analysis published by Forbes / Tungsten Automation, Deloitte, McKinsey, BCG, and Harvard Business Review. External sources are used as market context; the prioritization and implementation lens reflects the project’s current positioning and operating criteria.